Blind spots in AI vision revealed in study

19 February 2026

What if you took ChatGPT to an eye clinic? These scientists did.

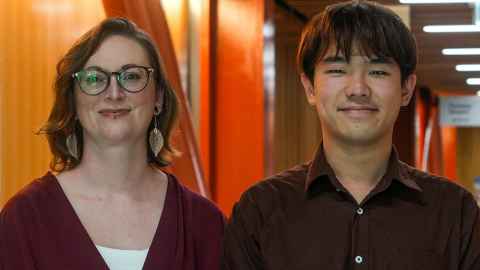

University of Auckland scientists put commercial AI systems – ChatGPT, Claude, Gemini – through clinical tests to investigate how close they are to achieving human-level vision.

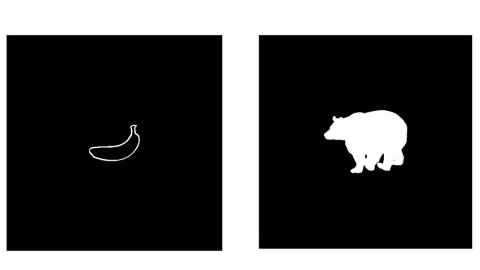

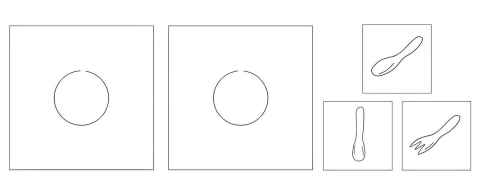

“The AIs were excellent at recognising objects, but bad at performing much simpler-seeming tasks such as comparing the lengths of lines or comparing unfamiliar shapes,” says Dr Katherine Storrs, the senior author of the study.

She runs the Computational Perception Lab in the School of Psychology.

The findings may have implications for scientists who use the AIs as models of human vision, and for robotics, where detailed spatial understanding, not just object recognition, is crucial, says Storrs.

The scientists put the AI systems through tests a neurologist might use with a stroke patient.

Artificial vision is doing amazing things, such as guiding self-driving cars and robots, and recognising faces more accurately than humans can.

“While these innovations have gotten really good, none of them requires the full range of human visual abilities,” says Gene Tangtartharakul, the PhD student who led the research.

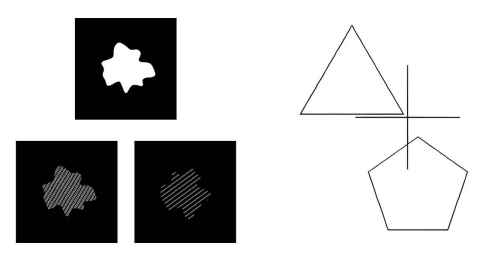

Sometimes we need to judge the orientation of a single edge (is this picture hung on the wall perfectly level?); sometimes to disentangle a cacophony of overlapping shapes (is there something hiding inside that bush?); and sometimes to recognise semantic categories (is this painting by Cézanne or Picasso?).

Most of the AI tools people know, such as ChatGPT, Claude and Gemini, are both large language models (LLMs) and visual-language models (VLMs): you can type into the chat box, but you can also show them a photo and ask questions about it.

“They are great at recognising familiar objects, but poor at tasks like making fine-grained judgements about particular parts of an image, or comparing unfamiliar shapes,” says Tangtartharakul.

The VLMs reached the clinical threshold for visual deficits on over a dozen of the simple and intermediate tests.

“The particular pattern of deficits would be very unusual for a human,” says Storrs. “When people have selective problems with certain visual functions, it’s usually our processing of complex things like faces.”

A human with this pattern of results would probably be diagnosed with visual form agnosia, a broad term for difficulties in interpreting visual shapes and patterns, which can be caused by damage to the visual cortex, she says.

Usually, however, it also involves difficulties in recognising objects.

There are case reports of people who successfully recognise real-world objects but struggle with seemingly “simpler” tasks like identifying geometric shapes or disentangling two overlapping shapes, which is similar to the pattern of difficulties shown by the VLMs.

“But this is an incredibly rare pattern of deficits in a human,” says Storrs.

One explanation for the VLM limitations is that their training data include plenty of named objects but fewer captions that precisely describe the size, shape, and position of lines in an image – the sort of information needed to perform a lot of the “simpler” visual tasks, she says.

The researchers’ article, “Visual language models show widespread visual deficits on neuropsychological tests”, was published in Nature Machine Intelligence.

Media contact

Paul Panckhurst | Science media adviser

M: 022 032 8475

E: paul.panckhurst@auckland.ac.nz